PROJECT SUMMARY

AI ReWired: How Communities Are Using AI to Support Social and Environmental Justice

Focus Areas: News & Media, Mobilities, Health

Research Program: People

Status: Completed

The future we are being sold is an automated wonderland, a techtopia that will use algorithms to heal our ecological crisis and restore social justice. A dream world where we enjoy endless innovation and growth in sparkling smart cities, where we are liberated from the burden of work, where the future of our species lies in billionaire-funded missions to Mars.

But what if this promise sounds more like a nightmare?

What are the alternatives?

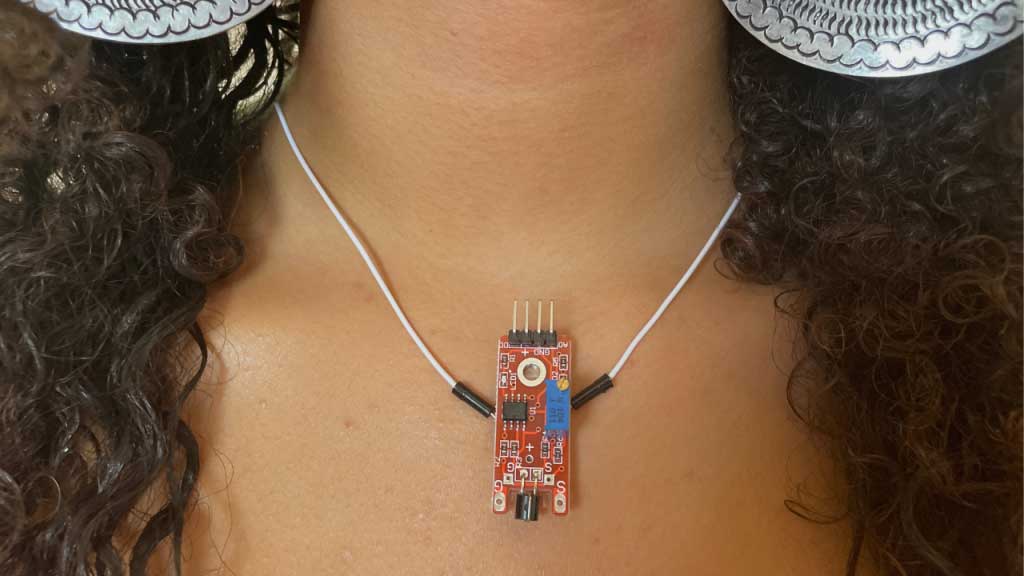

The AI ReWired project uses co-creative documentary film practice to uncover how diverse communities use AI systems to protect the environment, support social justice and promote fairness.

RESEARCHERS