PODCAST DETAILS

Intersectionalities of Automated Decision-Making and Race/Ethnicity

24 May 2022

Speakers:

Natalie Campbell, RMIT University

Assoc Prof Safiya U. Noble, UCLA

Prof Bronwyn Carlson, Macquarie University

Dr Karaitiana Taiuru, University of Otago

Duration: 30:30

TRANSCRIPT

Natalie Campbell:

Welcome to the ADM+S podcast. In today’s episode, we’re revisiting a talk about the intersectionalities of automated decision-making and race/ethnicity that was hosted by ADM+S Chief Investigator Professor Paul Henman of the Social Services focus area.

In this online seminar, delivered last November, we were joined by Assoc Prof Safiya Noble from UCLA, Prof Bronwyn Carlson from Macquarie University, and Dr Karaitiana Taiuru from the University of Otago, New Zealand.

Today you’ll hear from these expert panellists, engaging with questions of discrimination when it comes to AI, digital tech, and ADM. The first panellist speaking today is Professor Safiya Noble.

Prof Safiya Noble:

I think it’s so important that we’re having these conversations around the world in different places, in different lands, by different people, because the specifics of the way in which so many of the technologies and automated decision-making systems that we’re engaging with are applied in different kinds of racial and ethnic constructs and contexts, but I also believe that the struggles to resist the way in which we are being kind of further captured and dehumanised by automated systems, is some of the most important, pressing civil, human and sovereign rights in the world. And for those of us who feel compelled to respond to these concerns and to talk about these things, I just want to acknowledge how incredibly difficult it is to do that work in the face of trillions of dollars and currencies that are poured around the world by the tech sector into narratives about the liberatory possibilities and promise of these technologies. And I think that many of us in our respective communities see different things, and this is one of the reasons why scholars of race and ethnicity really must continue to be, and Indigenous studies, gender studies, sexuality, really need to be at the forefront of these conversations, because I think we ask different questions coming out of the kind of historical, political, social, economic context of our work, than makers of technology or people who are kind of techno-solutionists, are kind of embroiled in the techno-utopianism. So, it’s from that vantage point that I’m joining this conversation with you today.

So let me also share with you, because last summer in the United States a man named George Floyd was murdered by police officers in Minnesota, and he was one of many, many, many thousands, hundreds of thousands of black people, African Americans who have been murdered by the state, or whose lives have been kind of disregarded by the state, in these kind of very extremely brutal ways, and the uprising in the United States and around the world, and we are grateful for the solidarity of people around the world who still recognise the struggle of black people in the United States, brought about kind of a moment where for us in our centre at UCLA, there were many questions by people in the tech sector in particular, who, even outside the specifics of their jobs, were asking about their responsibility and what they needed to know, or what they should be thinking about at the intersection of race and technology. So, I put this slide together, kind of in memoriam of not only Mr Floyd but all of the scholars of colour, black scholars in particular of the United States, who’ve been writing at the intersection of race and technology for many years.

Indeed, years before I started writing about algorithms specifically, who were trying to re-narrate and animate a different set of concerns and conversations about the stakes of power and the kind of uneven ways in which power is enacted upon vulnerable people around the world, and in this country. So these are people that I just think, you know, I invite you, if you are interested and can think about things that you can pick up from their work to take into your work, these are people that I think are working in a variety of different spaces from sociology to black studies, communications, computer science, African American studies, black studies, American studies, but who are all of us who are thinking about race and power and technology, and I think that there are, we’re situated in different eras in history, but I think our work and this kind of collective body of work that’s emerging, what it signals to me is that no one person is really kind of carrying all the water on all this. Together we are trying to build a paradigm and shift a paradigm, at this moment. So, I just invite you to know about these scholars as well. All right, so what are some of the stakes, and I’m really just going to contour some of the things that I think could be a bit of a frame for my colleagues to also hang more evidence upon from your work as well, to think about what are the pointed ways in which we see race and ethnicity used and kind of weaponised in hierarchical power structures of control on people of colour continuously, in an incredibly kind of normative way that goes unquestioned.

Of course, let me just definitionally say, I mean, as a black studies professor I need to say that these constructs around race, at least in the US context, are really, when we think about race we’re talking about a system of power, and the way that system of power in the United States works is, it’s a hierarchical power based on a binary, and that binary is kind of predicated upon Europeanity at the top. Also, you could think of it as kind of all the ways in which a construct of whiteness gets made and people petition to be recognised. So many people from many parts of the world who are ethnically connected to different nations or kind of ethnically defined also seek to locate their ethnicity within this racial binary of power in the United States that puts Europeanity at the top, or whiteness kind of at the top, and people petitioning to be recognised through that power lens. And you know it relies upon blackness, black people, Africanness, as its polar opposite in that power structure in order to determine kind of the distribution of goods and services, who labours, and who receives the benefit of labour. Who is enslaved and who controls.

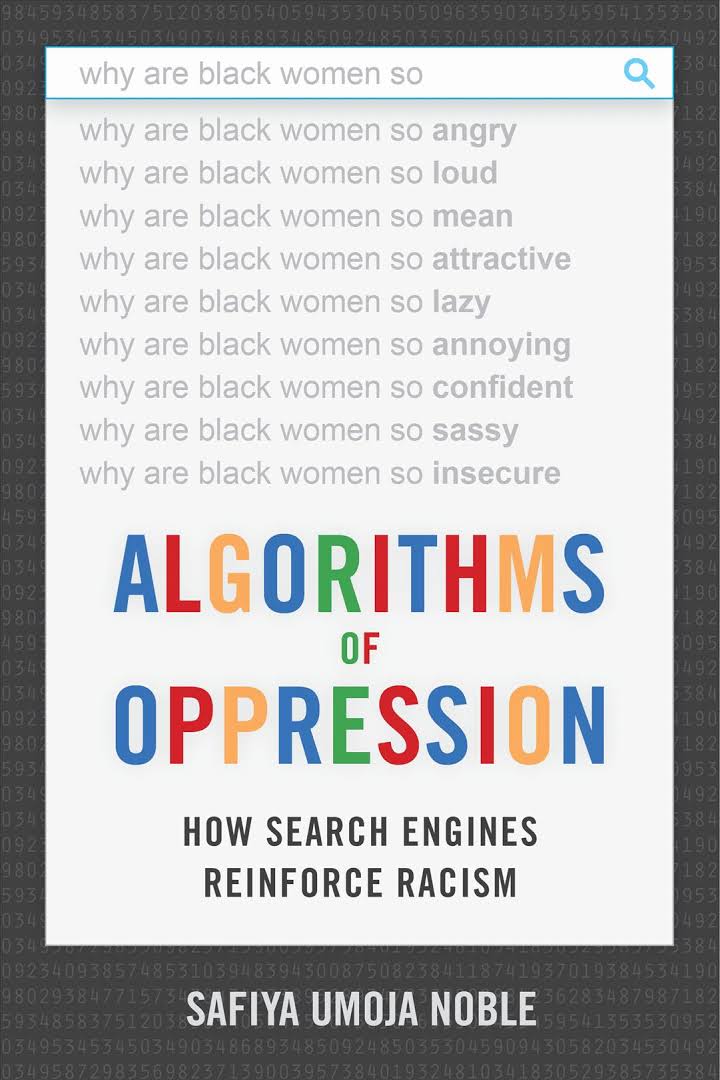

These legacies of race as it’s constructed here are really important and they often are conflated also with ethnicities. So, for me I would say my ethnicity is being African-American; a descendant of enslaved black and African people who are not connected to a specific nation-state, because of the transatlantic slave trade and our ancestors are really known to us on these lands. This is important because we have many kinds of black people and African people in the United States, from the Caribbean, from the continent, from Europe, from all kinds of places, so when we think about race as a lens to study technology, often what we’re talking about is that power system: who benefits and who loses within that power binary. And some of the most important work that points at that, are things like Joy Buolamwini’s work, talking about, and her famous study with Timnit Gebru and Deb Raji about how facial recognition for example is built, deployed, embraced, and it doesn’t recognise black women’s faces. So, you can see here’s an example in her study, their study, where they found that kind of the efficacy of something like a facial recognition technology, right out of the box from Amazon, from Microsoft, from large, you know, multinational companies who work and develop and deploy those systems, often are made in the image of the most powerful people in our society. So, you can see lighter-skinned males and men in the United States are more likely to be recognised, known, understood, have those technologies perfected upon them. And black women, darker-skinned women, having the least efficacy.

And this is very important because when we look at the deployment of these technologies, they are mostly pointed at black and brown faces through things like predictive policing technologies, or other kinds of surveillance technologies that get deployed into communities of colour, that mis-recognise us, and of course there are famous studies in the US about things like pointing facial recognition technologies at black or African-American members of Congress, of our federal government, and the facial recognition technology marking black congresspeople as criminals. Right, matching them up with criminal database mugshots. In my own work I have really tried to point out numerous examples, and Algorithms of Oppression is just like a study in all the failures of different kinds of technologies. Here’s one example from that book about doing a Google search in Google Images and looking for quote “unprofessional hairstyles for work” and being fed back almost exclusively black women with natural hair who have not straightened their hair, chemically treated our hair. I wear my hair natural 99 percent of the year, and so I’m one of, I would be one of these pictures, I could just see myself right there. And of course then looking for professional hairstyles for work and seeing almost exclusively white women and white, blonde women, right.

So, these kinds of systems are always interacting with, and marking, race and racial categories, even when they are touted as neutral, touted as simple tools, right. And these are the kinds of discourses that we hear constantly deployed around all kinds of technologies.

So, we think about these things, I think about the things, you know, going back a decade now. It’s just so hard to believe that for 10 years I’ve been thinking about arguing that computer code is not only kind of a human construct, that technology is a social construct like race and gender, and we understand things like race and gender as social constructs but it’s just so difficult still to dislodge this idea that technology is somehow neutral. It’s somehow apolitical, that it has no uneven application, and I take this back, still to this day I talk about the first study that I did that launched the book Algorithms of Oppression because I still find so many egregious searches like this. In the case for example of doing a search on black girls, you know, this was at a time when my daughter who’s grown now was a tween and a middle schooler, and I was thinking about her and my nieces and just curious to see how black girls were represented in Google search. And seeing that almost all, you know, 80 percent of the search results that come back on black girls, back, you know, a decade ago, were all pornography or hyper-sexualised, and of course what does this mean for girls, you know, children. For people who are vulnerable, for people who are not the majority in the population, who can’t control, influence or even use gamification strategies. Who don’t have the resources, can’t search engine optimise, can’t optimise themselves into a better state of being understood. Writing about that and studying about that for 10 years, you know, one of the things that I’ve learned is that there’s so many myths about what these technologies are, and so much obfuscation of how the algorithms work in large-scale tech companies, but there’s also something even more kind of pernicious that I think about and that is this idea that we will somehow solve these problems of racism and discrimination and oppression and colonisation and occupation, by holding up a phone and tapping on a piece of glass.

I mean like the ludicrous notion that we would somehow solve these problems with technology, when the technologies themselves are reticent to even take seriously the kinds of conversations we’re having today.

Natalie Campbell:

Following on with this discussion, we’ll now hear from Prof Bronwyn Carlson.

Prof Bronwyn Carlson:

So, for the last eight or nine years I’ve been working on research projects that look specifically at the everyday lives of Indigenous people here, and around the world, in relation to how it plays out on social media platforms, specifically. And one of the things that I’ve found in that research- so I’ve gone to every state and territory within this continent that we now call Australia and talked to people in all sorts of geographical locations, and more than 99% of those participants in my research studies have all spoken about the racism, discrimination, oppression, and violence that they’ve experienced online. And so, to do a bit of backup for that research I decided to take a look at media and how they report on Indigenous people and social media. And so, what I found quite significantly in that, over 1500 articles that I had a look at, and in coding this archive of news media, which was specifically on Indigenous people and social media: the categories of racism, hatred and abuse were far and away the most populated.

So, from police officers using Facebook profiles under fake names to people who, you know, had this intersectional hate of homophobia and racism towards Indigenous people, harassment and denigrating our significant individuals who are entering into political life. So, we have a number of Indigenous people and particularly Indigenous women who are now in the political space, where they’ve received death messages, threats of death, and threats of rape. And other similar kind of messages from the Australian public. And the reason this takes place is really that they have an audacity to claim their Indigenous identity in a public way and they have an audacity to still be here in 2021. Now the whole way through Australian history, we can see how Indigenous people have been written out and were not a people who have been thought of as people who will be here in the history, and I think this is really one of the key issues when we talk about technology. And I think Andre Brock points this out in their book, where they say that brown and black people are generally coded as people without technology, and so we see that same thing here in Australia. So, the fact that we are actually online and participating in this digital life, is a place that was never built or thought of or visioned that we’d be in it. But here we are, and so one of the reasons I say this, and I was thinking about when you were saying together we, you know, build and shift the paradigm and the important work that’s being done with your colleagues as well as yourself- but here in Australia I start to think about how many people are, how many Indigenous people, are afforded the privilege, right, of having some research funds to be researchers of technology, or Indigenous people’s digital lives.

And I have been very fortunate to have received three Australian Research Council grants that look specifically at this, and I’m still one of the very few people who look at Indigenous digital lives in Australia, and that intersection of technology and everyday lives.

So, I sit and think about that because I’m often called to talk about it, and I wonder who are the other people, like who are the other Indigenous people here who do that work? And it’s so few people because they don’t have funding and they don’t have the privileged platform to be able to engage in the kind of research. So this stuff goes unnoticed. You know, the kind of depth of our experiences online is largely missing from the scholarly work that’s written, and it’s generally the same couple of voices that get to speak on it. And then when I think about who are Indigenous people in the tech industry, right, who are part of the influencing of how this stuff operates. And so I was asked, I was invited, probably because of the work that I do, to a couple of the tech companies here in Sydney, so I went along there and I was looking around at these flash buildings and all these very important people, you know, they’ve got their own cafe and everything in there, it’s pretty schmick. And they give you lots of cool stuff as you leave, you know, fancy pens and notebooks and all the like, and there’s a snack bar straight out of some sort of movie about Google, or something from the US. So, you go there and I say, ‘So how many Indigenous staff do you have here?’ and then there’s the crickets chirping, like, ‘What?’ ‘Yeah, how many Indigenous staff do you have here?’ And then they tell me, ‘We’re really committed to, you know, building diversity within the workplace. We’re really committed to Indigenous people in the lands we’re on.’ ‘Yeah, so, right. How many Indigenous people work here?’ ‘Right, well, we don’t have anyone right now but we’ve hired somebody to paint a mural,’ and I’m like ‘oh awesome’, and then I go through all the gates to get out and on every gate there’s a black body guarding that gate and these are all people from the US who are guarding the gates as you go through this building. So that’s really interesting to me.

So there are no Indigenous people that work there, and so I’ve been invited into these tech companies for their reconciliation breakfasts and to join their reconciliation action plan groups and all this kind of stuff, but my research and my intellectual contribution to this space is never a consideration, in that I’m invited as an Indigenous person to make a fancy document called a reconciliation action plan, which you might not have in the US. They’re a document that says this company is doing things that are cultural and respectful to Indigenous people, and they’re fancy documents. And largely they’re ignored. They just look good, you know, they have a lovely dot painting on them, and so look fabulous. So, there’s very little happening. So then I ask other tech companies who are more interested in a pipeline of people, so more in the behind-the-scenes tech stuff like Google etc, you know, how do we create things? How do we operate algorithms, or, you know, all of these things. So the behind-the-scenes stuff, and again there are no Indigenous people, so when people talk about algorithms and bots as if these are some, you know, actual identities that are racist in and of themselves, people forget that these are actually produced by generally cisgendered men, white men, who create them in their own image, so then they go forth and create havoc and hell upon the lives of Indigenous, black and brown people. And in Australia the most targeted groups online are Indigenous women and Indigenous LGBTQIA community members. They’re the most targeted, and then there’s an intersectional targeting based on their gender and based on their identification as an Indigenous person.

So, if the regulation of these tech giants is not on, you know, in the place in which they are producing this technology, and doesn’t include the people that will be most impacted, then it will continue. But the truth of it, is it’s about money, it’s not about our welfare, it’s not about our safety and it’s not about our lives. It’s about collecting clicks for money and the more controversial that is the more money is actually made. And so that is the bottom line of it. Humans and people who are considered others, or ‘othered’ in the space are not necessarily the focus.

Natalie Campbell:

To round out this fantastic panel, Dr Karaitiana Taiuru talks about the protection of Maori rights under the Treaty of Waitangi in New Zealand, as a model for Indigenous rights protection in terms of digital, AI, algorithmic and data biases.

Dr Karaitiana Taiuru:

It’s amazing listening to my co-speakers, and their stories just mirror what happens in New Zealand. It’s shocking in New Zealand. I mean, I’m privileged in the fact that I can pass off as Caucasian or as Maori, and a male. My female colleagues who are Maori are basically targeted, systematically targeted by white women supremacists in encrypted chats. They basically have all their personal information gathered, they’re targeted in their homes, at their workplaces, and sometimes, or more often than not, the New Zealand police can’t do anything about it because there’s a fine line between breaking the law and being a nuisance. A group of Maori activists in New Zealand here essentially had to create their own underground group and basically ask for funding to buy security cameras and to attend events with physical security protecting them. And I heard the comments about being online, with Maori targeted, and especially in media articles when we talk about anything that’s pro-Maori, or about equity and equality, and yeah, I think in the last couple of years the hatred has just grown and grown, and since COVID-19, since New Zealand’s been in lockdowns this year, we’ve seen white supremacist groups trying to partner up with radical Maori groups or pro-Maori groups and promote through social media that Maori should not get the COVID vaccine. Yet statistically Maori are more likely to die from that, so there’s a whole lot of issues I see happening all around the world with minority peoples.

So just in New Zealand here, I guess as an Indigenous people we have some protections that other Indigenous peoples don’t have. New Zealand has two founding documents; one is the Declaration of Independence, and that flag up behind me there is one of those flags, it is a flag that represents Maori independence and states that Maori should govern themselves. And we also have the Treaty of Waitangi, which is recognised by the New Zealand government, and that basically says that Maori did not cede sovereignty and that we do have rights, certain rights. Some of the key aspects of the Treaty of Waitangi in terms of digital, AI, algorithms, biases etc. are that the treaty itself gives Maori the right to control their own taonga, or treasure, so we’ve identified that data is a treasure, therefore the New Zealand government is bound by the Treaty of Waitangi with data. So furthermore, the treaty offers three key principles that the New Zealand government is bound by. That’s the right for Maori and the government to co-design, co-govern, and co-manage projects and anything of benefit to Maori, and the government’s also required to ensure equity for Maori, so of course a lot of the racists and non-Maori say, well, how can data be a taonga? So, our traditional stories talk about all the data from the world being taken by one of our deities from the higher realms of the spirit world and brought down to earth. So that gives all data genealogy to our creators. So that’s the first aspect of acknowledging that data is a treasure. From a Maori point of view, anything that I touch, this Zoom conference, a photo, has a part of my life essence in it, so from a Maori point of view everyone who’s interacting with me right now has a part of my mauri, part of my life force. And that’s also sacred. So that’s also a treasure. So, our data, regardless of whether it’s been anonymised or not, has a mauri and it has a life force.

So, this has led us to be staunch advocates for Maori data sovereignty, very similar to Indigenous data sovereignty or countries’ sovereignty of their own data. We’ve got a movement pushing for Maori data sovereignty. So, where we have any data that’s either biological or digital or in any format, any information that is about Maori, by Maori, or for Maori should fall under Maori data sovereignty principles. And so those Maori data sovereignty principles rely on the two founding documents of New Zealand, the Treaty of Waitangi, and of course the United Nations Declaration on the Rights of Indigenous Peoples. It considers all of those and forces our government to accept that Maori data sovereignty must be considered.

In New Zealand we also have a largely government and community-funded organisation that deals with online abuse, and we also have New Zealand legislation that makes online abuse illegal. The problem with that legislation is it wasn’t co-designed with Maori. So, it was very Eurocentric and it’s almost impossible to claim or to prove that you’ve been abused or harassed online. And it also doesn’t consider any race laws. You can be racist, you can make any sort of comments or send any images you want, but it’s not against the law. So, in one way I think, I guess a lot of Indigenous peoples could look at New Zealand and, you know, think we are very progressive, and we’ve got lots of protections. But I think from on the ground here, we’ve still a long way to go, and I guess like with everyone else it will involve proper partnerships and co-design with Indigenous peoples to identify how best to prevent those biases.

Natalie Campbell:

Thanks for listening to this episode of the ADM+S podcast. To learn more about our research, visit admscentre.org.au.