Why do you see the results you do when you search for information online? It’s a complex mix of what the source is, its relationships to other sources online, and your own past browsing history and device settings.

But this formula is changing. Rather than being passively served content that search engines decide is most relevant (or that businesses have paid to promote), users are being offered more control over what they see online by some big tech platforms.

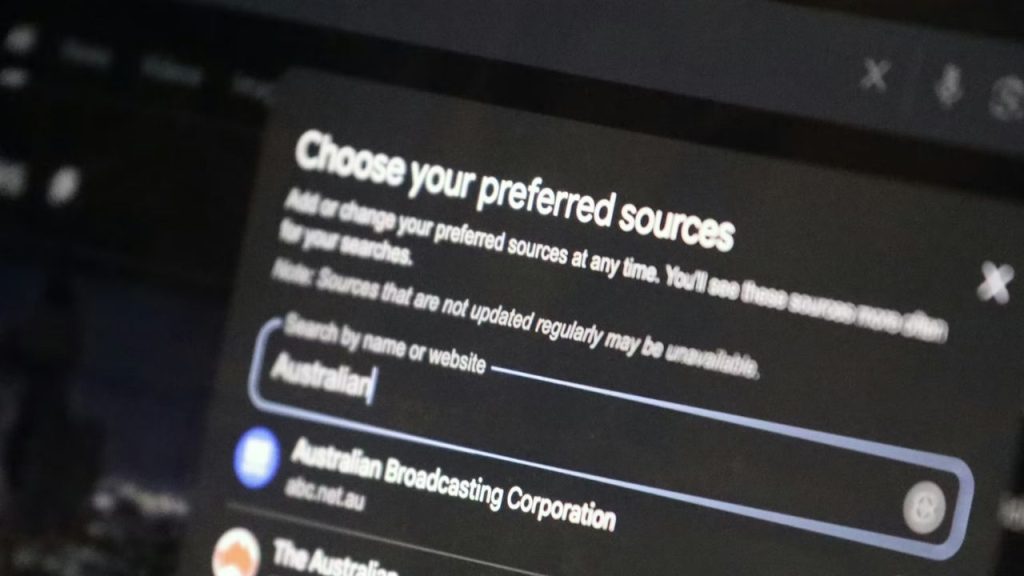

Earlier this year, Google launched the Preferred Sources feature in Australia and New Zealand. Through it, users can select organisations that are “preferred” and whose content they’d like to see more of in relevant search results.

In response, a raft of organisations, from news outlets to big banks, have started inviting their audiences and customers to choose them, with instructions on how to use this feature. News outlets such as the ABC, News.com.au, RNZ and The Conversation have all done so, among many others.

If you decide to use this new feature, there are potential benefits – but there can be unintended outcomes as well.

Where do you get your news?

In Australia, more adults say they get news from social media (26%) than from online news websites (23%). This means a feature like Preferred Sources might influence readers who get their news through search engines, but it won’t affect users who primarily get their news from social media apps.

Trading phones with someone and looking at their browsing history or recommended YouTube videos reveals just how much personalisation influences what we see online.

Big tech companies are known to harvest large amounts of data, making money in an attention economy from audience engagement. They also make money from knowing more about their users so they can sell this information to advertisers.

Much of the internet is governed by invisible algorithms: hidden rules dictating who sees what, and why. Algorithms often prioritise content that is engaging and sensational, which is one reason why misinformation can flourish online.

Recommendations based on your history can be helpful when you are choosing products to buy or Netflix shows to watch. When it comes to voting and politics, they become much more fraught.

Our own research has shown people’s online news and information environments are fragmented, complex, opaque, chaotic and polluted, and that users desire more control over what they see. But what are the potential impacts of this?

More control is good

At face value, more control over what we see online is a positive and empowering thing.

Greater user control shifts the balance away from the loudest, most popular or wealthiest voices, or those that manipulate algorithms the most, and towards the ones users are actually interested in hearing from.