PROJECT SUMMARY

Measuring Digital Inclusion for First Nations Australians

Focus Area: News and Media

Research Program: People

Status: Active

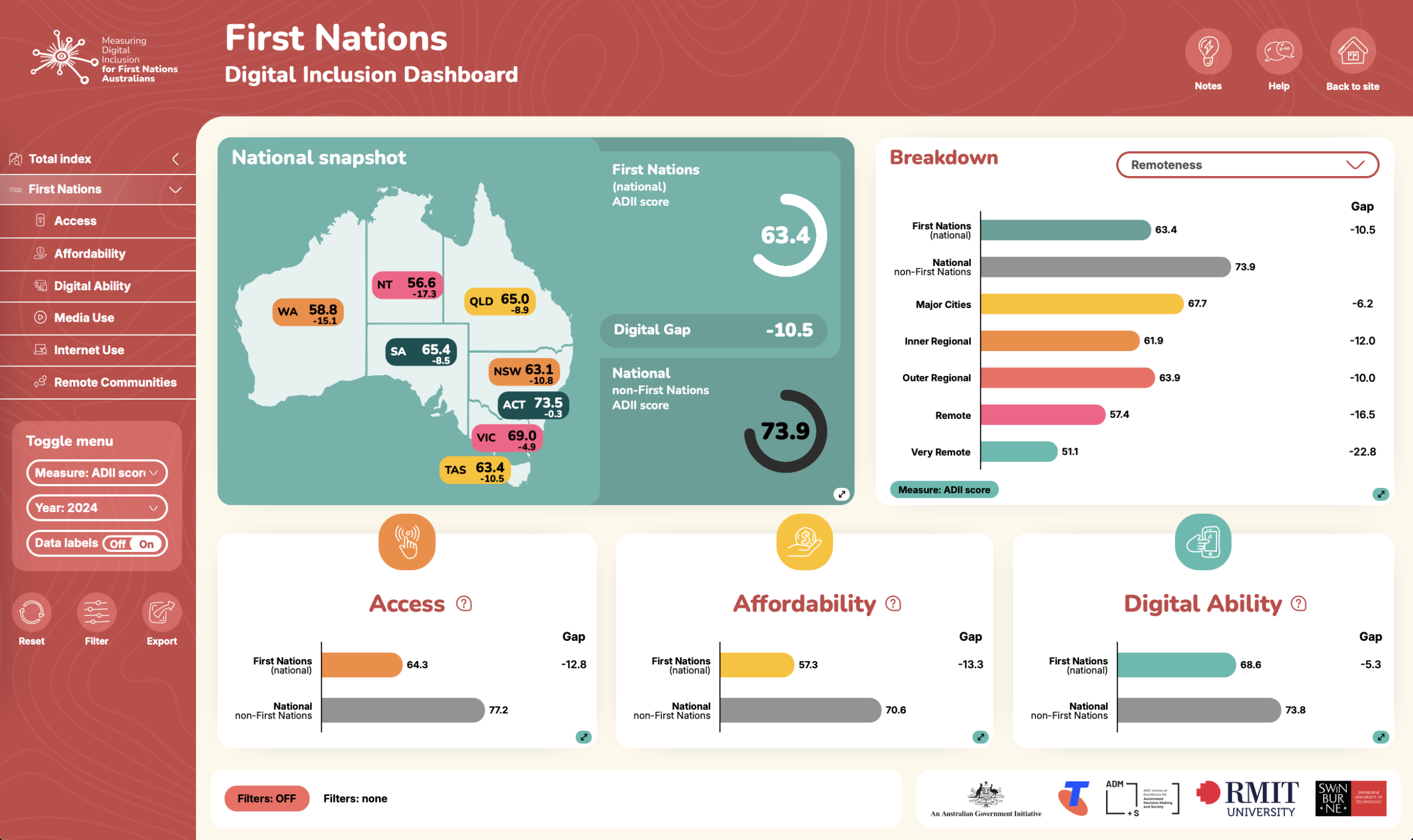

Measuring Digital Inclusion for First Nations Australians is a three-year project funded by the Australian Government to measure digital inclusion for First Nations people nationally and track changes in the scale and nature of the digital gap relative to non-First Nations Australians.

By expanding on the Australian Digital Inclusion Index (ADII) and acting in conjunction with the Mapping the Digital Gap (MTDG) research project, this project will enable measurement and tracking of progress towards Closing the Gap Target 17 (CTG 17):

‘By 2026, Aboriginal and Torres Strait Islander people have equal levels of digital inclusion’.

The project has First Nations leadership and governance throughout, including key staff within the research team, a First Nations steering group, contracting of a First Nations survey company, and partnership with First Nations organisations in targeted regional research sites.

The Measuring Digital Inclusion for First Nations Australians project is guided by the core values and principles outlined in the NHMRC Guidelines for ‘Ethical Conduct in Research with Aboriginal and Torres Strait Islander Peoples and Communities’ (2018), the AIATSIS Code of Ethics for Aboriginal and Torres Strait Islander Research (2021), and the Principles of Indigenous Data Sovereignty (e.g., Kukutai and Taylor 2016), in accordance with the United Nations Declaration on the Rights of Indigenous Peoples (2007).

Data collected in this project will be weighted and merged with data from the ADII and Mapping the Digital Gap project to generate an index of First Nations digital inclusion across Australia. First Nations Index scores will be benchmarked against non-First Nations scores to establish a comparative framework for measuring progress on Closing the Gap Target 17. The data will be shared with the public via an expanded First Nations Dashboard on the ADII website.
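
As a rough illustration of the weighting and benchmarking idea described above, the following minimal Python sketch computes a weighted average score for each group and the gap between them. It is not the project's or the ADII's actual methodology: the `Response` structure, the 0-100 score scale, the weights, and the function names `weighted_mean` and `inclusion_gap` are all assumptions made for this example, and the sample values are invented.

```python
from dataclasses import dataclass


@dataclass
class Response:
    """One survey response (hypothetical structure, not the project's schema)."""
    first_nations: bool  # respondent group
    score: float         # illustrative digital inclusion score, assumed 0-100 scale
    weight: float        # illustrative survey weight


def weighted_mean(responses):
    """Weighted average score: sum(weight * score) / sum(weight)."""
    total_weight = sum(r.weight for r in responses)
    if total_weight == 0:
        raise ValueError("total weight must be positive")
    return sum(r.weight * r.score for r in responses) / total_weight


def inclusion_gap(responses):
    """Non-First Nations weighted mean minus First Nations weighted mean."""
    first_nations = [r for r in responses if r.first_nations]
    others = [r for r in responses if not r.first_nations]
    return weighted_mean(others) - weighted_mean(first_nations)


# Invented values for illustration only; these are not project data.
sample = [
    Response(first_nations=True, score=60.0, weight=1.0),
    Response(first_nations=True, score=70.0, weight=3.0),
    Response(first_nations=False, score=75.0, weight=2.0),
    Response(first_nations=False, score=80.0, weight=2.0),
]
gap = inclusion_gap(sample)
```

In this sketch a positive `gap` means the non-First Nations group scores higher on average, and a gap of zero would correspond to equal levels on the illustrative score.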

To ensure a representative sample of First Nations Australians, the project has partnered with the First Nations-led survey company Ipsos Aboriginal and Torres Strait Islander Research Unit to undertake 1350 surveys in two rounds of data collection across 2025/6 and 2026/7, using a mix of online and face-to-face surveys.

In addition, the research team partners with First Nations organisations to conduct in-person surveys in 10 regional locations to ensure a nationally representative sample.

PUBLIC RESOURCES

First Nations Digital Inclusion Dashboard

Target audience: First Nations organisations and communities, government agencies, industry, researchers, general public

The First Nations Digital Inclusion Dashboard enables First Nations organisations and communities to explore the data in ways that suit their own needs and priorities.

Australian Digital Inclusion Index

Target audience: Government agencies, researchers, general public

The Australian Digital Inclusion Index uses data from the Australian Internet Usage Survey to measure digital inclusion across three dimensions of Access, Affordability and Digital Ability. We explore how these dimensions vary across Australia and across different social groups.

2024-5 RESEARCH SITES

Ipsos Aboriginal and Torres Strait Islander Research Unit (ATSIRU) collected data using a mix of methods. Working with local First Nations researchers, they conducted 1360 face-to-face surveys in regional and urban sites, and an additional 630 online and phone surveys via their iMob Panel.

Mapping the Digital Gap researchers conducted partnered research and collected 807 surveys in collaboration with local First Nations co-researchers in 11 remote communities.

The research team also collaborated with First Nations partner organisations to undertake 729 surveys in an additional 10 regional sites. These surveys were not available for inclusion in the 2025 Index; their results will be published in a separate report and dashboard page in early 2026.

MORE INFORMATION

Counting on Connectivity: Measuring Digital Inclusion for First Nations Australians in 2025

12 Nov 2025

Project Plan

Participant Information Sheet

PARTNERS

This project received funding support from the Australian Government.

Ipsos Aboriginal and Torres Strait Islander Research Unit