Online ads are becoming harder to spot – but we’re not powerless to stop it

Author Daniel Angus, Lauren Hayden and Nicholas Carah

Date 3 June 2026

Profound changes are ahead for online advertising. At the recent Google Marketing Live event, the tech giant outlined expanded artificial intelligence (AI) systems for digital ads.

What will that look like? Picture ads integrated directly into your conversation with an AI chatbot. Or a discounted price that only you see because an AI system served it based on your browsing behaviour, intent to buy the product, and what’s available locally. And, of course, generative AI tool suites for producing online ads from start to finish.

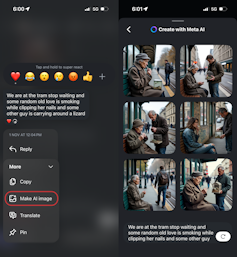

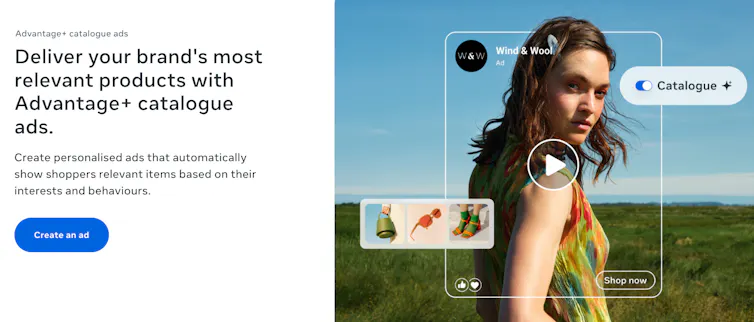

Meta and ByteDance (parent company of TikTok) have similarly accelerated the rollout of their own AI-driven advertising systems. Meta is expanding tools that automatically generate and personalise ad images, video backgrounds, captions and targeting across Facebook and Instagram feeds.


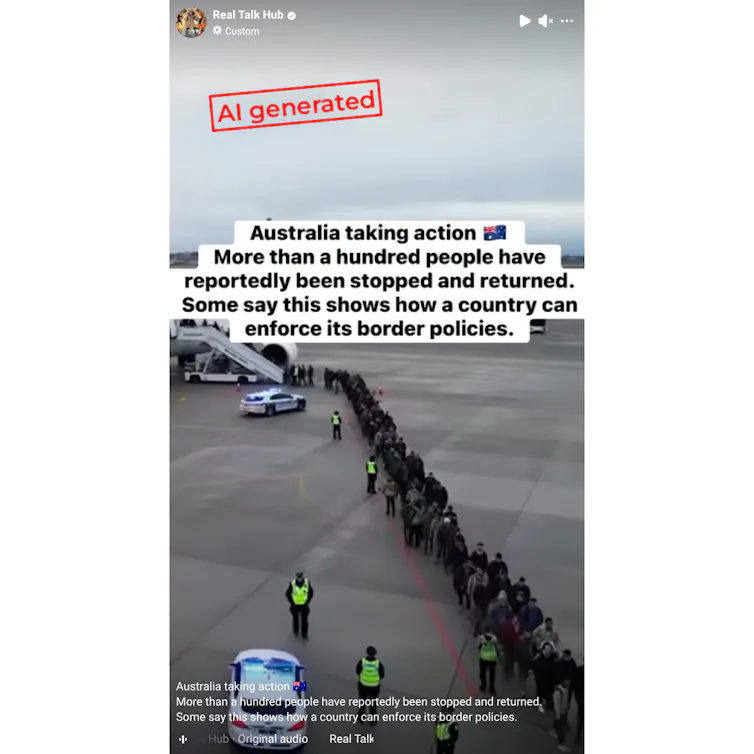

ByteDance’s TikTok Symphony suite can generate promotional videos, scripts, AI avatars, dubbed voiceovers, and creator-style content from simple text prompts or product links.

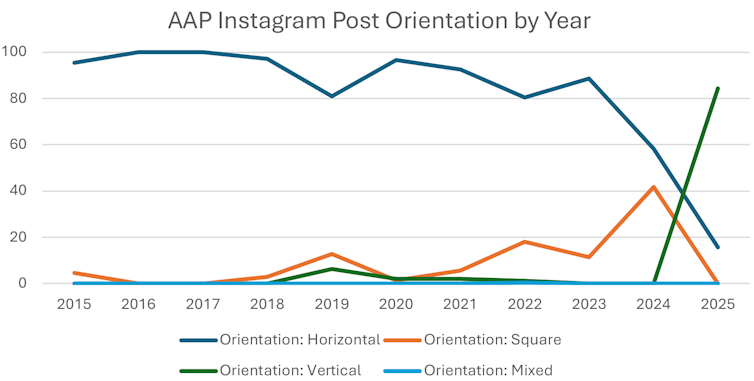

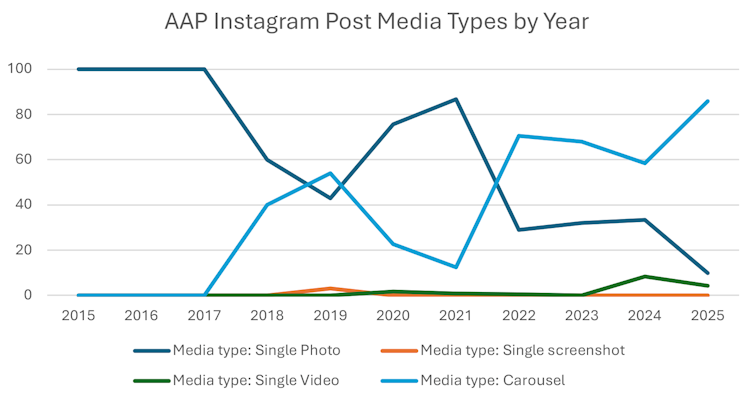

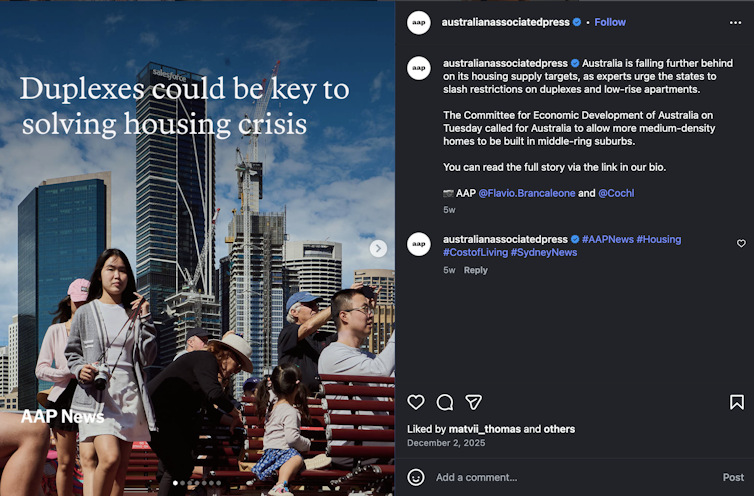

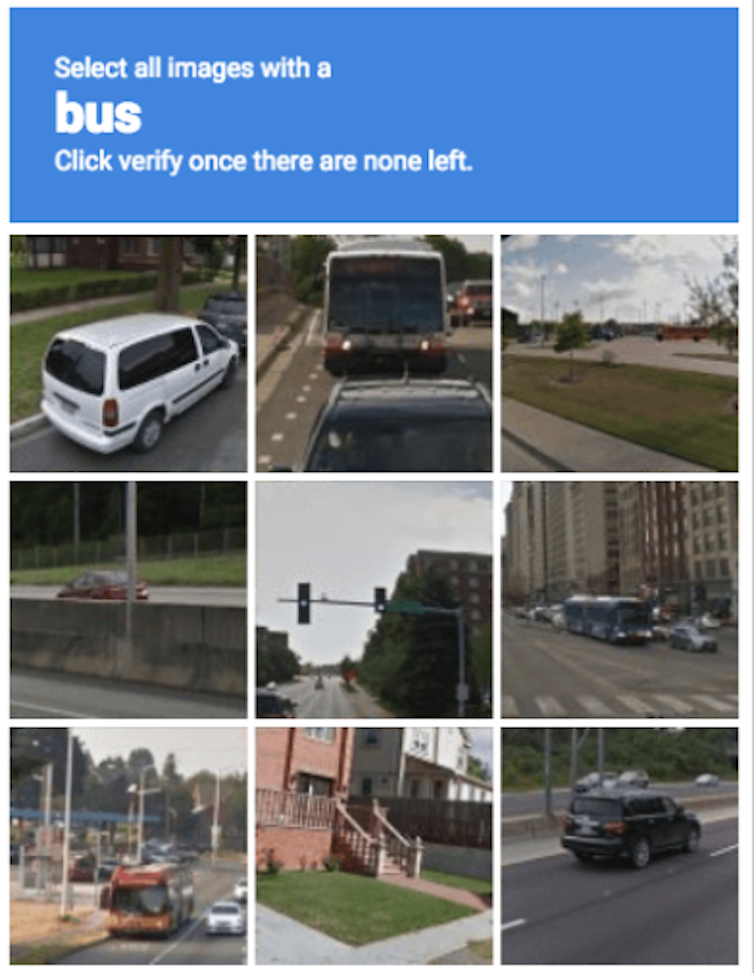

At the same time, ads on these social media platforms are becoming harder to recognise. As one example, Instagram and Facebook recently eliminated their familiar “sponsored” labels in favour of smaller “ad” markers.

It may look like a minor interface tweak, but it signals something larger: the steady erosion of clear boundaries between advertising, entertainment, recommendation, and ordinary social interaction.

Dissolving into the flow

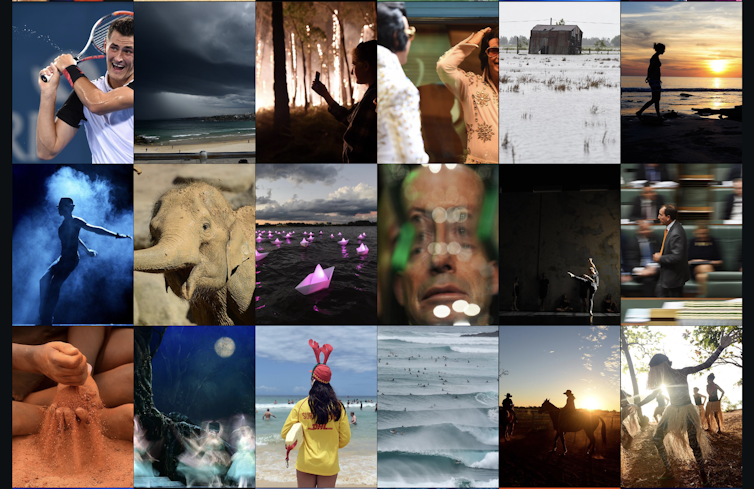

Social media platforms have engineered ads to mimic organic content. Just think of influencer and creator partnerships, AI-personalised search results, or brands using memes.

Increasingly, online ads are less of an interruption to the content you consume. Instead, they’re designed to dissolve into the flow itself.

When companies buy advertising space on social media, ads are automatically disclosed as a commercial message. With partnerships and AI-personalised results, the platforms currently offer limited forms of disclosure.

The result is a blurring of the lines. Products, ideas and political messages are spread through ads that look a lot like all other, non-sponsored content. And the less an ad feels like an ad, the more effective it often becomes. This is precisely where public accountability starts to break down.

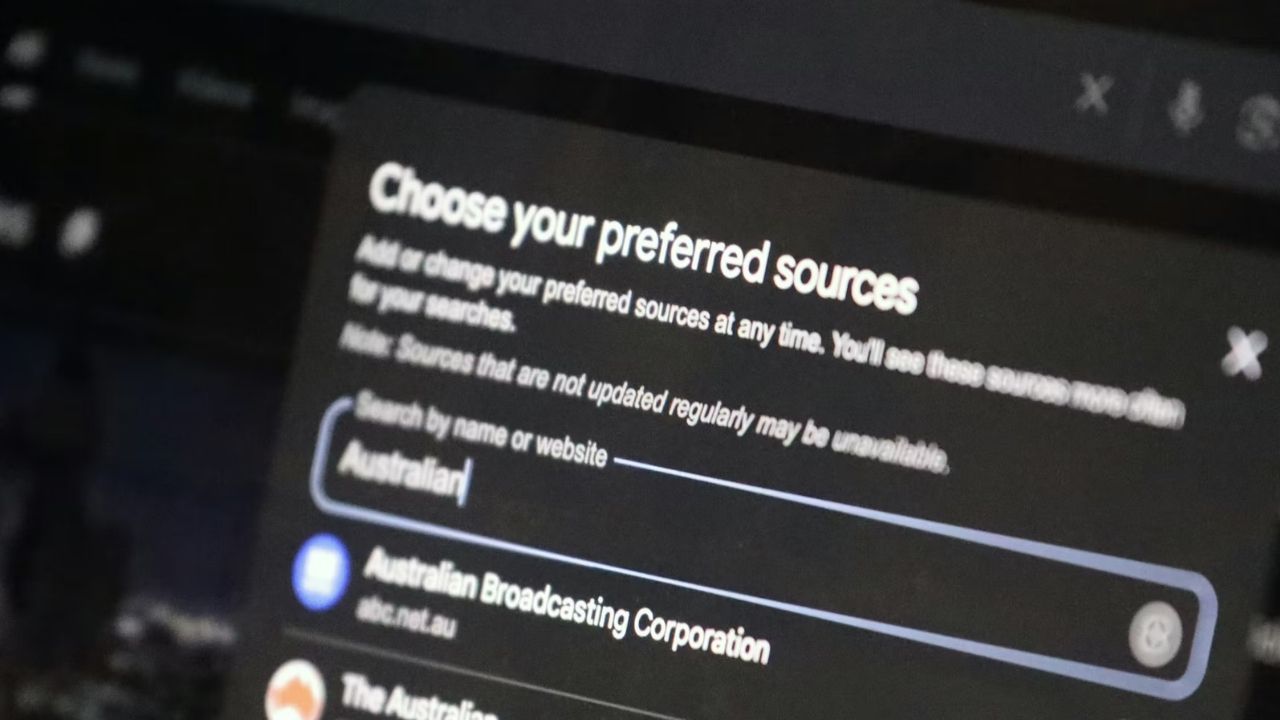

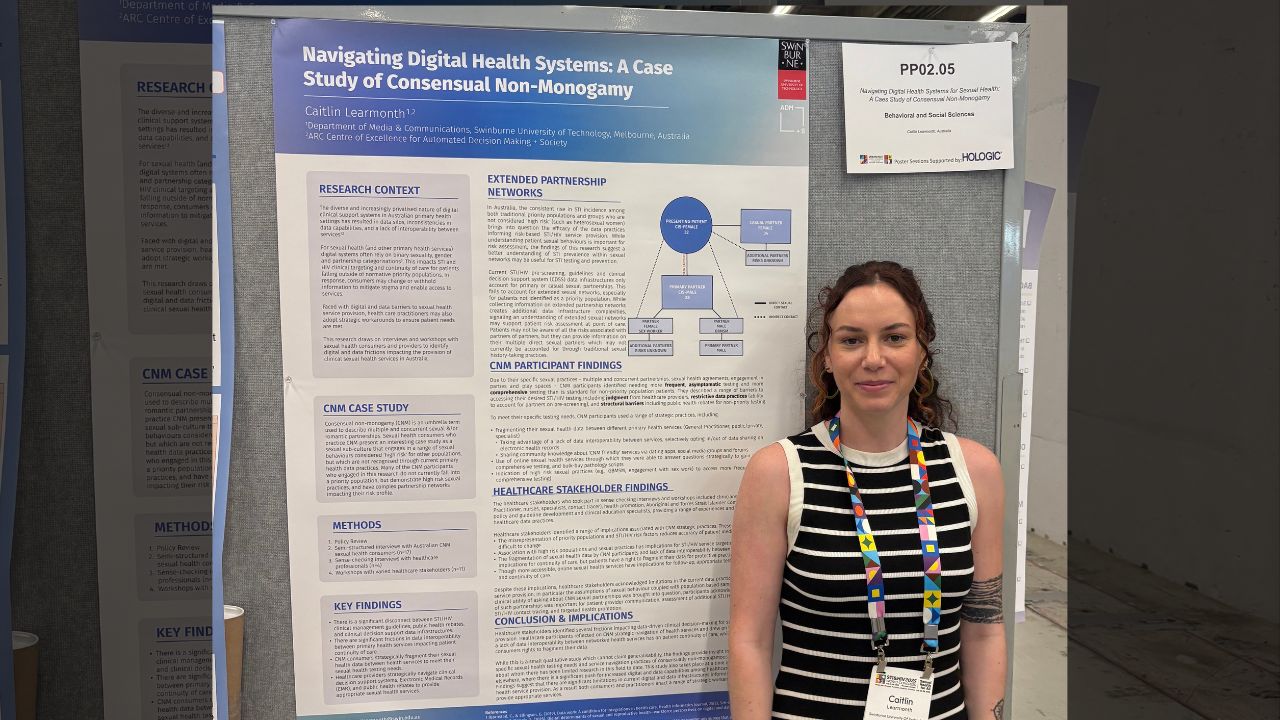

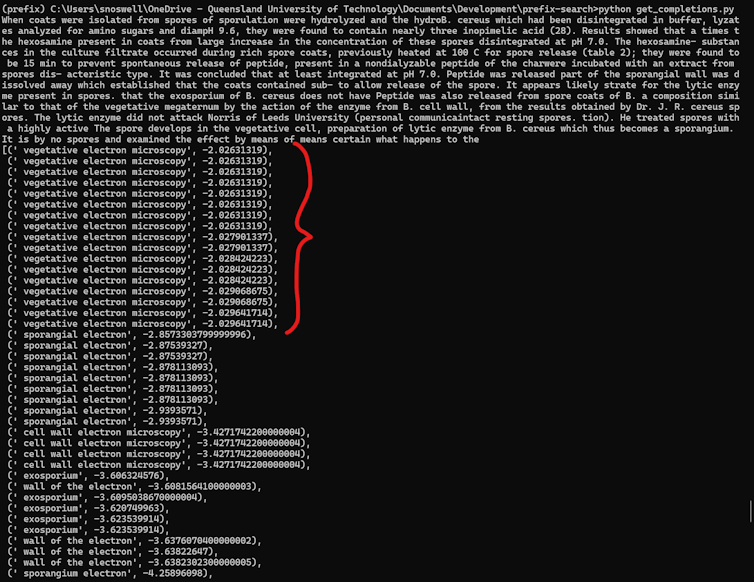

For several years, researchers like us, working through projects such as the Australian Ad Observatory and the Australian Internet Observatory, have documented how difficult it already is to observe and analyse online advertising systems.

Our work has examined everything from political advertising and astroturfing campaigns to the marketing of alcohol and unhealthy foods and the veracity of “green” claims made by advertisers.

In many cases, this work depends on relatively simple but crucial forms of signalling. Researchers need to know what counts as an advertisement, who paid for it, where it appeared, and why it was shown to particular audiences.

But those signals are weakening.

Blurry and harder to audit

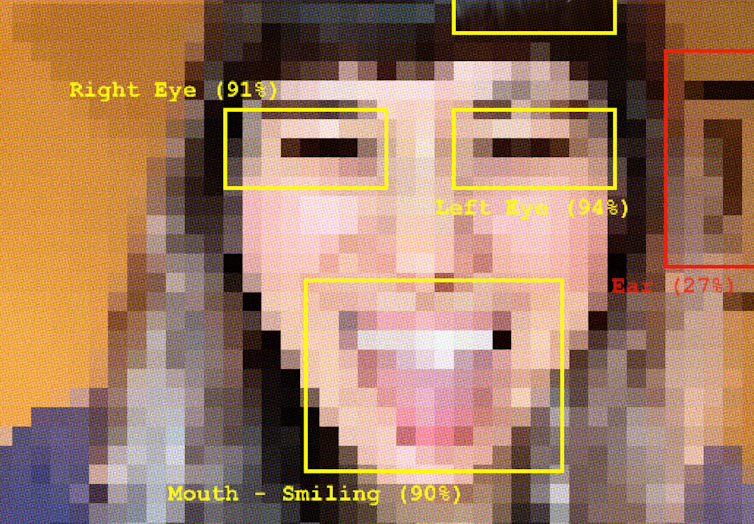

A blurred system is harder to audit. Audiences should be able to recognise when they’re targeted with ads. Without clear ad disclosures, we can’t easily detect or question commercial influence in our feeds and search results.

New AI tools intensify this challenge. Instead of seeing discrete ads in your feed, you might be getting a stream of product suggestions and discounts nobody else sees. Because these offers are visible only to you, regulators and researchers have no way to audit them.

These personalised, disguised ads could also make product recommendations that are biased and potentially harmful. For instance, you might be telling an AI assistant that you’re stressed, and suddenly be offered a discount on a case of wine.

AI-driven dynamic advertising is highly concerning for products that are unhealthy, harmful or regulated – such as alcohol and gambling. If ads appear one moment and are gone the next, it’s almost impossible to make sure they comply with relevant regulations.

The danger is not simply that users may encounter more advertising. It’s that the underlying commercial and promotional logic and messaging become even harder to see.

We’re not powerless

Australia’s emerging digital duty of care framework offers an opportunity to confront this problem directly. Much of the current discussion has focused, understandably, on harms such as misinformation, scams, abuse, or risks to children.

But opaque advertising systems are also a public interest issue. They shape political communication, consumer behaviour, health information, financial decision-making, and civic trust.

If platforms increasingly profit from blurring advertising and ordinary communication, then stronger positive obligations around disclosure and transparency become essential.

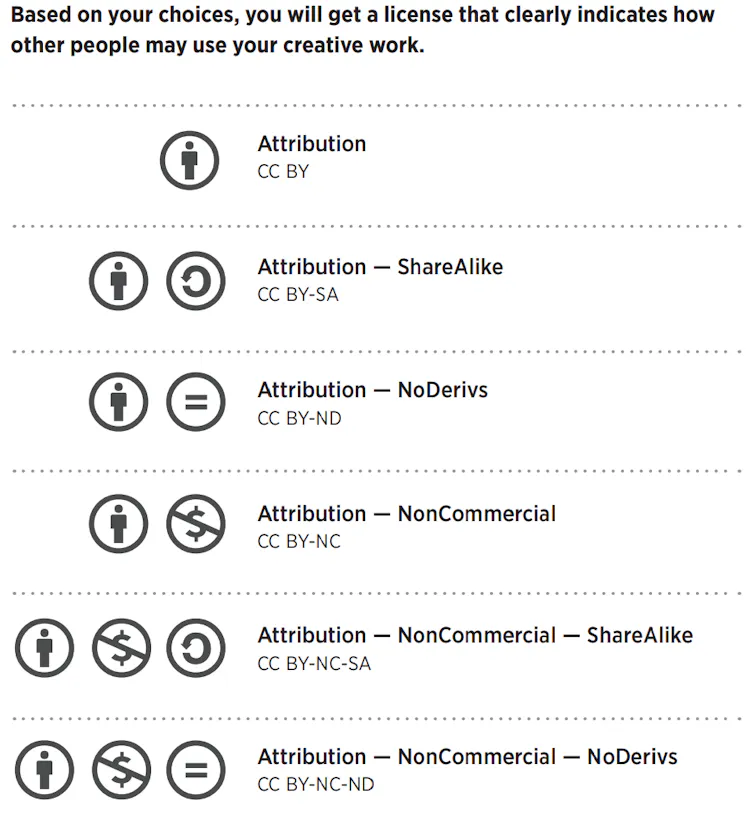

Minimum disclosures for digital advertising on social media should include:

- consistent and clear human- and machine-readable advertising labels across formats and services

- accessible ad archives for public-interest scrutiny, including AI variations

- inclusion of meaningful and accurate information about targeting and delivery, and

- clear identification of AI-generated or AI-mediated advertising, including specifics on how AI was used.

This is not about banning advertising. Nor is it about returning to some imagined “clean” internet untouched by commerce. Advertising has always adapted to new media and will continue to do so.

But there’s a fundamental difference between visible persuasion and persuasion that disappears into the infrastructure.

Without clear signals on what is and isn’t an ad, we lose one of the few remaining ways to understand who is shaping the information environments we increasingly depend on every day.

Daniel Angus, Professor of Digital Communication, Director of QUT Digital Media Research Centre, Queensland University of Technology; Lauren Hayden, Research Officer, School of Communication and Arts, The University of Queensland, and Nicholas Carah, Associate Professor in Digital Media, The University of Queensland

This article is republished from The Conversation under a Creative Commons license. Read the original article.