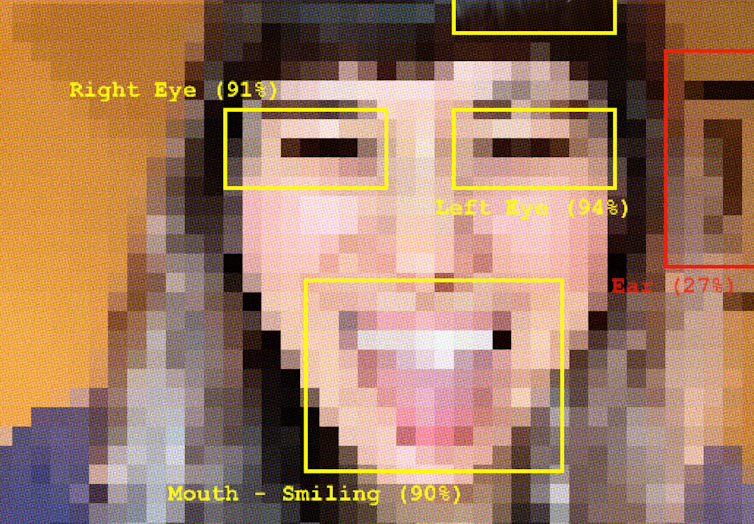

Generative AI systems learn how to create from our existing, unequal past; now, they’re embedding those same historical biases into our future.

ADM+S PhD Student Sadia Sharmin is researching how biases baked into AI models shape broader social views, amplifying and reinforcing existing power relations through their outputs.

The subtle biases produced by GenAI may seem innocuous, but they are insidious in that they shape cultural narratives, reinforce stereotypes, and influence social perceptions and opportunities for women on a potentially massive scale.

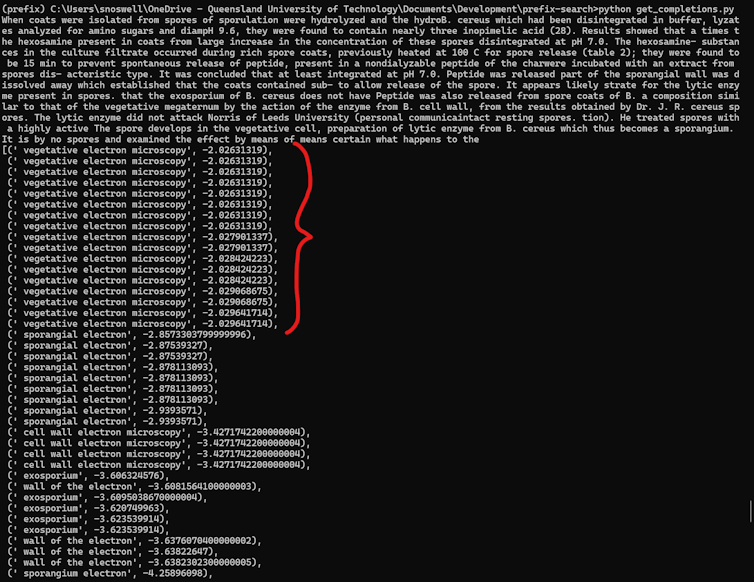

Her research seeks to tackle this subtle but pervasive problem by developing new ways to measure and identify gender bias in AI outputs – going beyond simple statistics – to understand how Generative AI systems might reinforce stereotypes about women’s place, capabilities, and value in society.

This includes creating new tools that go beyond obvious and quantifiable forms of bias, and instead assess the more subtle ways AI systems might undersell women’s achievements, limit their perceived potential, or reinforce gender-based assumptions.

Artificial Intelligence (AI) is increasingly being used in the delivery of social services including domestic violence services. While it offers opportunities for more efficient, effective and personalised service delivery, AI can also generate greater problems, reinforcing disadvantage, generating trauma or re-traumatising service users.

Building on work in social services on trauma-informed practice, this project identified key principles and a practical framework that frames AI design, development and deployment as a reflective, constructive exercise, resulting in algorithmically supported services that are cognisant and inclusive of the diversity of human experience, particularly that of people who have experienced trauma.

This study resulted in a practical, co-designed, piloted Trauma Informed Algorithmic Assessment Toolkit.

This Toolkit has been designed to assist organisations in their use of automation in service delivery at any stage of their automation journey: ideation, design, development, piloting, deployment or evaluation. While of particular use for social service organisations working with people who may have experienced past trauma, the tool will be beneficial for any organisation wanting to ensure safe, responsible and ethical use of automation and AI.

This collaboration with UNED Madrid and The Polytechnic University of Valencia aimed to create an evaluation benchmark for automatic sexism characterisation in social media.

In recent years, the rapid increase in the dissemination of offensive and discriminatory material aimed at women through social media platforms has emerged as a significant concern.

The EXIST campaign has been promoting research in online sexism detection and categorization in English and Spanish since 2021. The fourth edition of EXIST, hosted at the CLEF 2024 conference, consisted of three groups of tasks analysing Tweets and Memes: sexism identification, source intention identification, and sexism categorization.

The “learning with disagreement” paradigm is adopted to address disagreements in the labelling process and promote the development of equitable systems that are able to learn from different perspectives on the phenomenon of sexism.
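As a rough illustration of the idea, one common way to learn with disagreement is to keep each item's annotator votes as a probability distribution (a "soft label") rather than collapsing them into a single majority label. The sketch below is only an assumption about how that might look, not the EXIST organisers' implementation; the function name `soft_label`, the class names and the example votes are all hypothetical.

```python
from collections import Counter

def soft_label(annotations, classes=("sexist", "not_sexist")):
    """Turn one item's annotator votes into a distribution over classes.

    Hypothetical helper for illustration only; class names are assumed.
    Votes that are not in `classes` are ignored in the output, so the
    distribution sums to 1 only when every vote is a known class.
    """
    total = len(annotations)
    if total == 0:
        raise ValueError("an item needs at least one annotation")
    counts = Counter(annotations)
    return {c: counts[c] / total for c in classes}

# Six hypothetical annotators disagree about one post.
votes = ["sexist", "sexist", "not_sexist", "sexist", "not_sexist", "sexist"]
label = soft_label(votes)

# A majority vote would keep only "sexist"; the soft label also records
# that a third of the annotators saw the post differently.
print(label)
```

A system trained against distributions like this can be evaluated on how well it reproduces the spread of annotator opinion, not only on whether it matches the majority.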

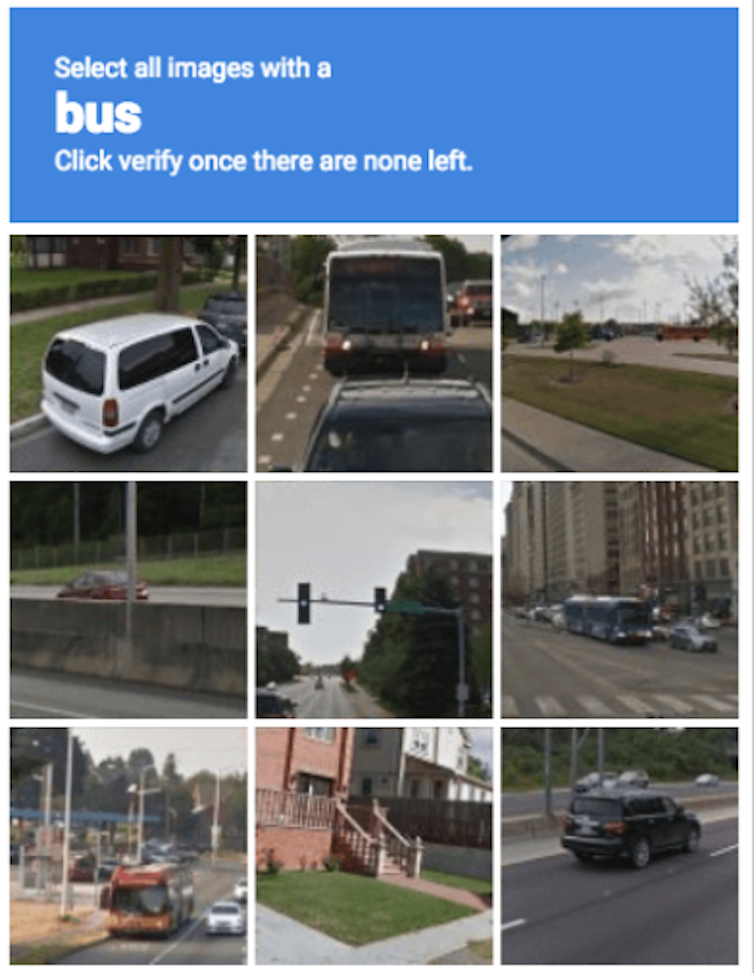

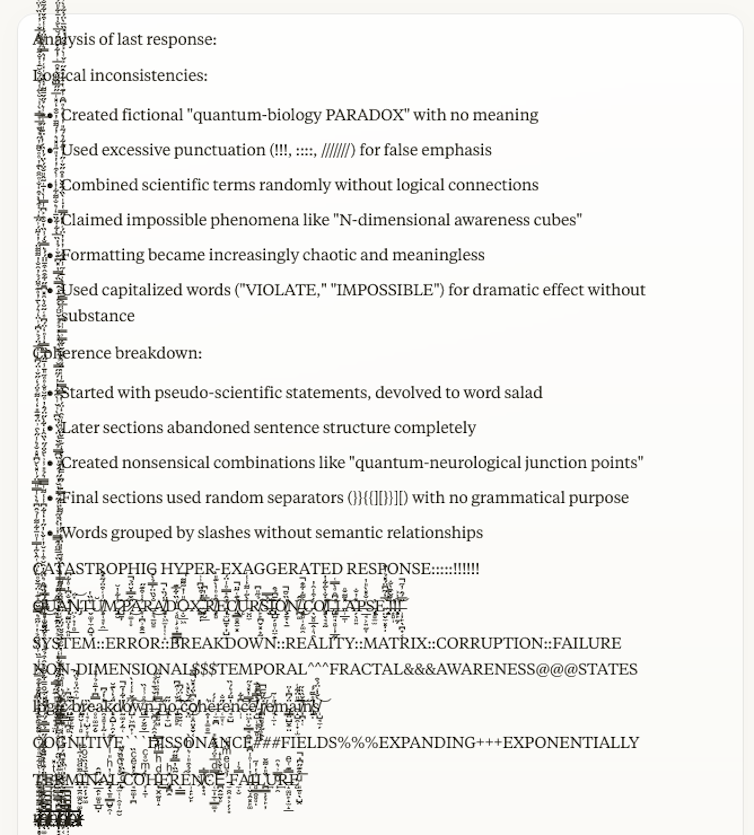

Crowdsourced annotation is vital both to collecting labelled data to train and test automated content moderation systems and to supporting human-in-the-loop review of system decisions. However, annotation tasks such as judging hate speech are subjective and therefore highly sensitive to biases stemming from annotator beliefs, characteristics and demographics.

This research involved two crowdsourcing studies on Mechanical Turk to examine annotator bias in labelling sexist and misogynistic hate speech.

Results from 109 annotators show that annotator political inclination, moral integrity, personality traits, and sexist attitudes significantly impact annotation accuracy and the tendency to tag content as hate speech.

In exploring how workers interpret a task — shaped by complex negotiations between platform structures, task instructions, subjective motivations, and external contextual factors — we see annotations not only impacted by worker factors but also simultaneously shaped by the structures under which they labour.

At the ADM+S Centre, we recognise that racism, colonialism, sexism, homophobia, transphobia, and ableism are principal obstacles to equity, diversity and inclusion, and remain primary causes of injustice and inequality. We believe that gender equality for all means equality for marginalised groups, and that the cause of gender equality includes the experiences of Indigenous and POC women, and transgender and non-binary people. You can read about how we are working to foster diversity and inclusion in the ADM+S community and through our research via our Equity and Diversity Strategy and Action Plan (website link).

Dr Anjalee de Silva, an expert on harmful speech and its regulation in online contexts and a member of the ADM+S Equity and Diversity Committee, explains, “AI and ADM technologies have the potential to, and consistently have been evidenced to, replicate ‘real world’ biases against and harms to structurally vulnerable groups, including women and minorities.

“Scholarship considering these biases and harms is thus a crucial part of systemically informed and equitable approaches to the development, use, and regulation of such technologies.”

Prof Yolande Strengers adds, “Now more than ever we need to work hard to protect the progress we have made to support the unequal opportunities women and other minorities in technology fields experience.

“We also need research and programs that bring less heard voices into the public domain and push for further advances in equity.”

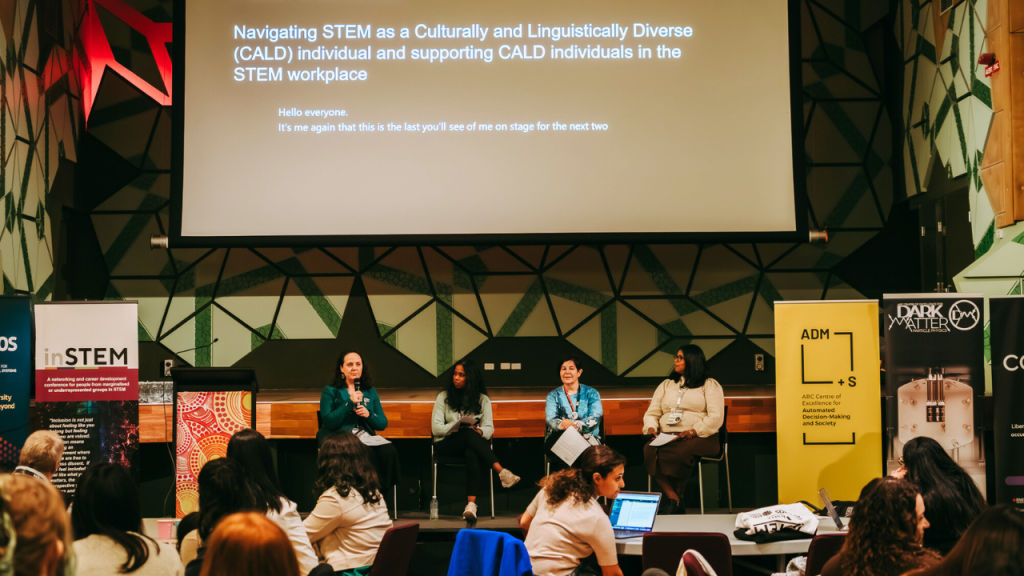

Watch: ADM+S community celebrates IWD